
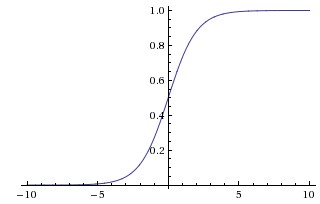

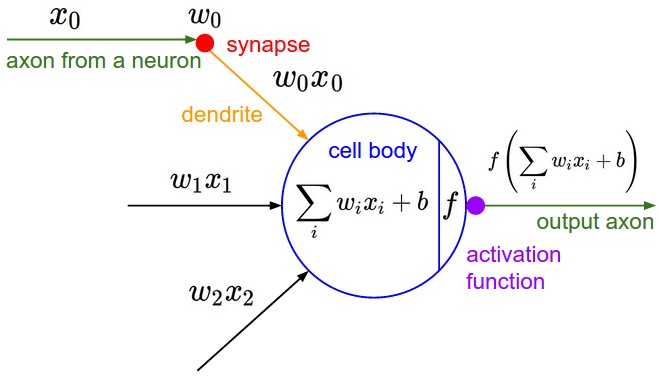

Softmax layer in a neural network

I feel a little bit bad about providing my own answer for this because it is pretty well captured by amoeba and juampa, except for maybe the final intuition about how the Jacobian can be reduced back to a vector.

You correctly derived the diagonal entries of the Jacobian matrix, which is to say that

$$ {\partial h_i \over \partial z_j} = h_i(1 - h_j) \qquad : i = j $$

and, as amoeba stated, you also have to derive the off-diagonal entries of the Jacobian, which yield

$$ {\partial h_i \over \partial z_j} = -h_i h_j \qquad : i \ne j $$

These two definitions can be conveniently combined using a construct called the Kronecker delta, so the definition of the gradient becomes

$$ {\partial h_i \over \partial z_j} = h_i(\delta_{ij} - h_j) $$

So the Jacobian is a square matrix

$$ \left[J\right]_{ij} = h_i(\delta_{ij} - h_j) $$

All of the information up to this point is already covered by amoeba and juampa. The problem is, of course, that we need to get the input errors from the output errors that are already computed. Since every output $h_i$ depends on all of the inputs, the gradient at input $z_k$ collects a contribution from each output error:

$$ [\nabla z]_k = \sum\limits_{i} \nabla h_{i,k}, \qquad \nabla h_{i,k} = \left[J\right]_{ik} [\nabla h]_i $$

Given the Jacobian matrix defined above, this is implemented trivially as the product of the matrix and the output error vector (the sum runs over the first index of $J$, but $J$ is symmetric, so no transpose is needed):

$$ \vec{\sigma_l} = J\vec{\sigma_{l+1}} $$

If the softmax layer is your output layer, then combining it with the cross-entropy cost model simplifies the computation to

$$ \vec{\sigma_l} = \vec{h} - \vec{t} $$

where $\vec{t}$ is the vector of labels and $\vec{h}$ is the output from the softmax function. Not only is the simplified form convenient, it is also extremely useful from a numerical stability standpoint.

I am using a softmax activation function in the last layer of a neural network, but I have problems with a safe implementation of this function.
A naive implementation would be this one: `Vector y = mlp(x); // output of the ...`

Neural Network Activation Functions in C#: ... that allow neural networks to model complex data. The softmax activation function is designed so that a return value is in the range ...
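The "safe implementation" concern above is usually about overflow in the exponential. A minimal sketch in Python, assuming the common max-subtraction trick (the original question's code is truncated, so this is not its author's implementation): subtracting the largest input before exponentiating leaves the result mathematically unchanged, because the common factor cancels in the ratio, but no exponent is ever larger than 0. The names `softmax` and `log_softmax` are illustrative.

```python
import math


def softmax(z):
    """Softmax of a list of floats, shifted by max(z) to avoid overflow."""
    m = max(z)
    # Every exponent is <= 0, so math.exp cannot overflow here.
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)  # >= 1.0, because the largest entry contributes exp(0)
    return [e / total for e in exps]


def log_softmax(z):
    """log(softmax(z)) computed without forming tiny probabilities first."""
    m = max(z)
    log_sum = m + math.log(sum(math.exp(v - m) for v in z))
    return [v - log_sum for v in z]


if __name__ == "__main__":
    print(softmax([1.0, 2.0, 3.0]))
    print(softmax([1000.0, 1000.0]))
    print(log_softmax([1000.0, 0.0]))
```

For inputs such as `[1000.0, 1000.0]`, a direct `math.exp(1000.0)` raises `OverflowError`, while the shifted version still returns equal probabilities. Working with `log_softmax` is also a reasonable choice when the next step is a cross-entropy loss, since it avoids taking the log of a probability that has underflowed to zero.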

Neural Network with softmax activation. Role of Bias in Neural Networks. Implementation of a softmax activation function for neural networks. Artificial Neural Networks/Activation Functions (Wikibooks): The softmax activation function is given as ...

Lecture 14: Neural Networks, Andrew Senior, Google NYC, December 12, 2013. [Derivative of softmax activation function.]

$$ {\partial z_i \over \partial w_{ij}} = x_j \qquad (19) $$

$$ {\partial L \over \partial w_{ij}} = -\sum\limits_k {\hat{y}_k \over y_k}\, y_k(\delta_{ik} - y_i)\, x_j \qquad (20) $$

$$ = x_j \sum \dots \qquad (21) $$
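The claim in the answer above, that pushing the cross-entropy error through the softmax Jacobian collapses to $\vec{h} - \vec{t}$, can be checked numerically. The following is a small sketch in plain Python, not code from any of the quoted sources; the function names and the example values of `z` and `t` are made up for illustration, and the loss is assumed to be $-\sum_i t_i \log h_i$.

```python
import math


def softmax(z):
    """Softmax with the max subtracted for numerical safety."""
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def softmax_jacobian(h):
    """J[i][j] = h_i * (delta_ij - h_j), built from the softmax output h."""
    n = len(h)
    return [[h[i] * ((1.0 if i == j else 0.0) - h[j]) for j in range(n)]
            for i in range(n)]


def backprop_through_softmax(h, grad_h):
    """Input error: grad_z[k] = sum_i J[i][k] * grad_h[i]."""
    jac = softmax_jacobian(h)
    n = len(h)
    return [sum(jac[i][k] * grad_h[i] for i in range(n)) for k in range(n)]


# Hypothetical example values: three logits and a one-hot label.
z = [0.5, -1.0, 2.0]
t = [0.0, 0.0, 1.0]

h = softmax(z)
# Gradient of the assumed loss -sum_i t_i * log(h_i) with respect to h.
grad_h = [-t_i / h_i for t_i, h_i in zip(t, h)]

grad_z = backprop_through_softmax(h, grad_h)
shortcut = [h_i - t_i for h_i, t_i in zip(h, t)]

if __name__ == "__main__":
    print(grad_z)
    print(shortcut)
```

The two printed vectors should agree up to floating-point rounding. The shortcut never divides by $h_i$, which is one way to read the remark about numerical stability: the explicit route computes $-t_i / h_i$, which becomes very large when $h_i$ is close to zero.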
